someone tried to sell me a fucking AI fridge the other day. Why the fuck would I want my fridge to "learn my habits?" I don't even like my phone "learning my habits!"

Why does a fridge need to know your habits?

It has to keep the food cold all the time. The light has to come on when you open the door.

What could it possibly be learning?

Hi Zron, you seem to really enjoy eating shredded cheese at 2:00am! For your convenience, we’ve placed an order for 50lbs of shredded cheese based on your rate of consumption. Thanks!

We also took the liberty of canceling your health insurance to help protect the shareholders from your abhorrent health expenses in the far future

If your fridge spies on you, certain people can get better insight into how healthy your food habits are, how organized you are, how often things go bad and get thrown out, and what medicine (the kind that has to be kept cold) you put in there and how often you use it.

That will then affect your insurance, your credit rating, and possibly many other ratings other people are interested in.

- Know when you're about to put groceries in so it makes the fridge colder so the added heat doesn't make things go bad.

- Know when you don't use it and let it get a tiny bit warmer to save a teeny bit of power. (The vast majority of power is cooling new items, not keeping things cold though.)

- Tell you where things are?

- Ummm... Maybe give you an optimized layout of how to store things?

- Be an attack vector on your home's wifi

- Wait, no, uh,

- Push notifications

- Do you not have phones?

And it would improve your life zero. That is what is absurd about LLMs in their current iteration: they provide almost no benefit to the vast majority of people.

All a learning model would do for a fridge is send you advertisements for whatever garbage food is on sale. Could it make recipes based on what you have? Tell it you want to slowly get healthier and have it assist with grocery selection?

Nah, fuck you and buy stuff.

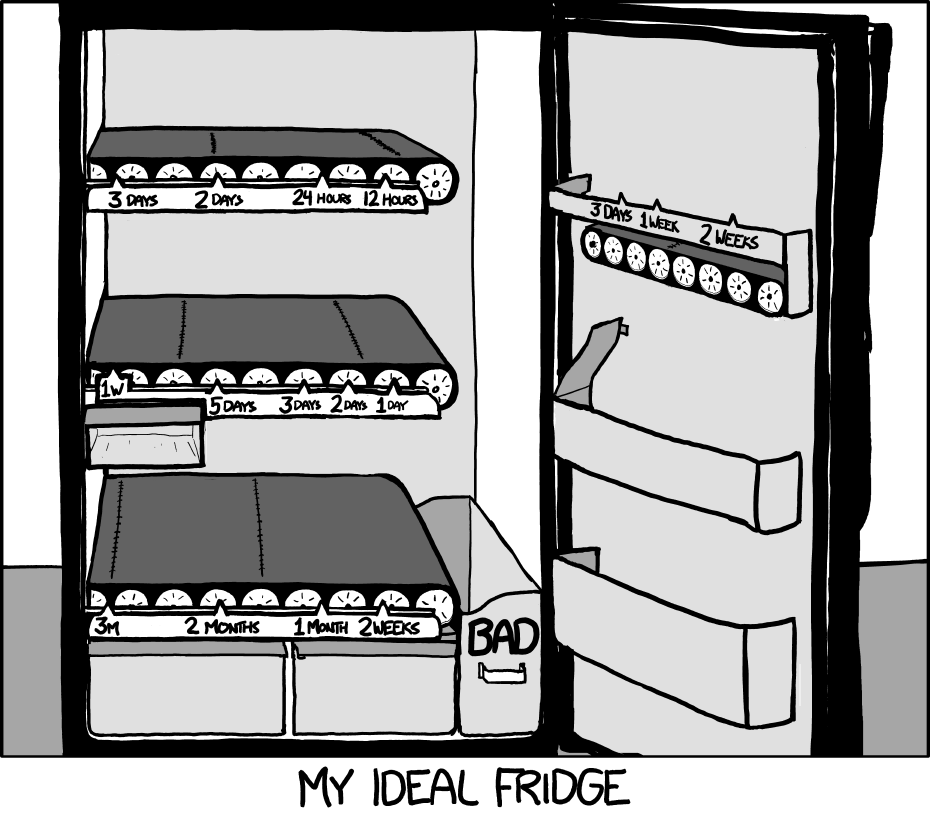

I still want this fridge. (Source)

it doesn't seem all that hard to make, as long as you don't mind the severely reduced flexibility in capacity and glass bottles shattering against each other at the bottom

...just under 2,000 voters said "yes."

And those people probably work in some area related to LLMs.

It's practically a meme at this point:

Nobody:

Chip makers: People want us to add AI to our chips!

The even crazier part to me is some chip makers we were working with pulled out of guaranteed projects with reasonably decent revenue to chase AI instead

We had to redesign our boards and they paid us the penalties in our contract for not delivering so they could put more of their fab time towards AI

This is one of those weird things that venture capital does sometimes.

VC is injecting cash into tech right now at obscene levels because they think that AI is going to be hugely profitable in the near future.

The tech industry is happily taking that money and using it to develop what they can, but it turns out the majority of the public don't really want the tool if it means they have to pay extra for it. Especially in its current state, where the information it spits out is far from reliable.

I don't want it outside of heavily sandboxed and limited scope applications. I don't get why people want an agent of chaos fucking with all their files and the systems they've cobbled together.

I have to endure a meeting at my company next week to come up with ideas on how we can wedge AI into our products because the dumbass venture capitalist firm that owns our company wants it. I have been opting not to turn on video because I don’t think I can control the cringe responses on my face.

Back in the 90s in college I took a Technology course, which discussed how technology has historically developed, why some things are adopted and other seemingly good ideas don't make it.

One of the things that is required for a technology to succeed is public acceptance. That is why AI is doomed.

There's really no point unless you work in specific fields that benefit from AI.

Meanwhile every large corpo tries to shove AI into every possible place they can. They'd introduce ChatGPT to your toilet seat if they could

"Shits are frequently classified into three basic types..." and then gives 5 paragraphs of bland guff

With how much scraping of reddit they do, there's no way it doesn't try ordering a poop knife off of Amazon for you.

One of our helpdesk staff told me about his amazing idea for our software the other day.

"We should integrate AI into it..."

"Right? And have it do what?"

"Uh, I don't know"

This from the same man who came up with an idea for orange juice pumped directly into your home, and you pay with crypto.

And the scary thing is, I can imagine these things coming out of the mouths of people in actual positions of power, where laughing at them might actually get people fired...

orange juice pumped directly into your home, and you pay with crypto

[Furiously taking notes]

who came up with an idea for orange juice pumped directly into your home

Maybe not as cool, but a city-wide pneumatic mail system would be cool. Too expensive and hard to maintain, not to mention the pests and bacteria that would live in it, but imagine ordering a milkshake with some fries and in 10 minutes hearing "thump", opening that little door in the wall of your apartment and seeing a package there (it'll be a mess inside though).

And what do the companies take away from this? "Cool, we just won't leave you any other options."

I would pay extra to make sure that there is no AI anywhere near my hardware.

I don't mind the hardware. It can be useful.

What I do mind is the software running on my PC sending all my personal information, screenshots, and keystrokes to a corporation that will use all of it for profit, to build a user profile for targeted advertising, and that can potentially be used against me.

I would pay for a power efficient AI expansion card. So I can self host AI services easily without needing a 3000€ gpu that consumes 10 times more than the rest of my pc.

Any “ai” hardware you buy today will be obsolete so fast it will make your dick bleed

AI for IT companies is looking more and more like 3D was for the movie industry

All fanfare and overhype, a small handful of examples that do seem a solid step forward with millions of others that are just a polished turd. Massive investment for something the market has not demanded

They want you to buy the hardware and pay for the additional energy costs so they can deliver clippy 2.0, the watching-you-wank-edition.

This is yet another dent in the “exponential growth AGI by 2028” argument I see popping up a lot. Despite what the likes of Kurzweil, Musk, etc would have you believe, AI is severely overhyped and will take decades to fully materialise.

You have to understand that most of what you read about is mostly, if not all, hype. AI, self driving cars, LLMs, job automation, robots, etc are buzzwords that the media loves to talk about to generate clicks. But the reality is that all of this stuff is extremely hyped up, with not much substance behind it.

It’s no wonder that the vast majority of people hate AI. You only have to look at self driving cars being unable to handle fog and rain after decades of research, or dumb LLMs (still dumb after all this time) to see why. The only real things that have progressed quickly since the 80s are cell phones, computers, etc. Electric cars, self driving cars, stem cells, AI, etc etc have all not progressed nearly as rapidly. And even the electronics stuff will slow down soon due to the end of Moore’s Law.

There is more to AI than self driving cars and LLMs.

For example, I work at a company that trained a deep learning model to count potatoes in a field. The computer can count so much faster than we can, it’s incredible. There are many useful, but not so glamorous, applications for this sort of technology.

I think it’s more that we will slowly piece together bits of useful AI while the hyped areas that can’t deliver will die out.

Machine vision is absolutely the biggest slam dunk: "AI" that does work and has practical applications. However, it was doing so a few years before the current craze. Basically the current craze was driven by ChatGPT, with people overestimating how far that will go in the short term because it almost acts like a human conversation, and that seemed so powerful.

No, but I would pay good money for a freely programmable FPGA coprocessor.

If the AI chip is implemented as one, and is useful for other things I'm sold.

I think manufacturers need to get a lot more creative about simplified computing. The RPi Pico's GPIO engine is powerful yet simple, and a good example of what is possible with some good application analysis and forethought.

Remember when the IoT was very new? There were similar grumblings of "Why would I want to talk to my refrigerator?" And now more and more things are just IoT connected for no reason.

I suspect AI will follow a similar path into the consumer mainstream.

IoT became very valuable, for them at least, as data collecting devices.

Why would I pay more for x company to have a robot half ass the work of all the employees they're gonna cut?

I still don’t understand how the buzzword of AI 10x’d all these valuations, when it’s always either a) exactly what they’ve been doing before, now with a fancy new name, or b) deliberately shoehorning AI in, in ways with no practical benefit.

That's kind of abstract. Like, nobody pays purely for hardware. They pay for the ability to run software.

The real question is, would you pay $N to run software package X?

Like, go back to 2000. If I say "would you pay $N for a parallel matrix math processing card", most people are going to say "no". If I say "would you pay $N to play Quake 2 at resolution X and fps Y and with nice smooth textures," then it's another story.

I paid $1k for a fast GPU so that I could run Stable Diffusion quickly. If you asked me "would you pay $1k for an AI-processing card" and I had no idea what software would use it, I'd probably say "no" too.

Yup, the answer is going to change real fast when the next Oblivion with NPCs you can talk to needs this kind of hardware to run.

I have no clue why anybody thought I would pay more for hardware if it goes with some stupid trend that will blow up in our faces sooner or later.

I don't get the AI hype. I see a lot of companies very excited, but I don't believe it can deliver even 30% of what people seem to think.

So no, definitely not paying extra. If I can, I will buy stuff without AI bullshit. And if I cannot, I will simply not upgrade for a couple of years since my current hardware is fine.

In a couple of years either the bubble is going to burst, or they really will have put in the work to make AI do the things they claim it will.

I honestly have no idea what AI does to a processor, and would therefore not pay extra for the badge.

If it provided a significant speed improvement or something, then yeah, sure. Nobody has really communicated to me what the benefit is. It all seems like hand waving.

What they mean is that they are putting in dedicated processors or other hardware just to run an LLM. It doesn't speed up anything other than the faux-AI tool they are implementing.

LLMs require a ton of math that is better suited to video processors than the general purpose cpu on most machines.

It's bad enough they shove it on you in some websites. Really not interested in being their lab rats.

84% said no.

16% punched the person asking them for suggesting such a practice. So they also said no. With their fist.

I agree that we shouldn't jump immediately to AI-enhancing it all. However, this survey is riddled with problems, from selection bias to external validity. Heck, even internal validity is a problem here! How does the survey account for social desirability bias, sunk cost fallacy, and anchoring bias? I'm so sorry if this sounds brutal or unfair, but I just hope to see fewer validity threats. I think I'd be less frustrated if the title could be something like "TechPowerUp survey shows 84% of 22,000 respondents don't want AI-enhanced hardware".

Depends on what kind of AI enhancement. If it's just more things that nobody needs and that solve no problem, it's a no brainer. But for computer graphics, for example, DLSS is a feature people do appreciate, because it makes sense to apply AI there. Who doesn't want faster and perhaps better graphics from using AI rather than brute forcing it, which also saves on electricity costs?

But that isn't the kind of thing most people on a survey would even think of, since the benefit is readily apparent and doesn't even need to be explicitly sold as "AI". They're most likely thinking of the kind of products where the manufacturer put an "AI powered" sticker on it because their stakeholders told them it would increase their sales, or it allowed them to overstate the value of a product.

Of course people are going to reject white collar scams if they think that's what "AI enhanced" means. If legitimate use cases with clear advantages are produced, they will speak for themselves and I don't think people would be opposed. But obviously, there are a lot more companies that want to ride the AI wave than there are legitimate use cases, so there will be quite some snake oil being sold.