A Supabase employee pleads with his software to not leak its SQL database like a parent pleads with a cranky toddler in a toy store.

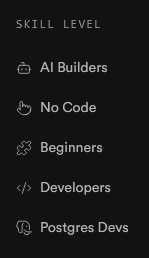

The Supabase homepage implies AI bros are two levels below "beginner", which I found somewhat amusing:

Skill Level

It's also completely accurate - AI bros are not only utterly lacking in any sort of skill, but actively refuse to develop their skills in favour of using the planet-killing plagiarism-fueled gaslighting engine that is AI, and actively look down on anyone who is more skilled than them or willing to develop their skills.

oof! That's hilarious!

Another day, another jailbreak method - a new method called InfoFlood has just been revealed, which involves taking a regular prompt and making it thesaurus-exhaustingly verbose.

In simpler terms, it jailbreaks LLMs by speaking in Business Bro.

maybe there's just enough text written in that psychopathic techbro style with similar disregard for normal ethics that llms latched onto that. this is like what i guess happened with that "explain step by step" trick - instead of grafting from pairs of answers and questions like on quora, lying box grafts from sets of question -> steps -> answer like on chegg or stack or somewhere else where you can expect answers will be more correct

it'd be more of a case of getting awful output from awful input

Penny Arcade chimes in on corporate AI mandates:

A company that makes learning material to help people learn to code made a test of programming basics for devs to find out if their basic skills have atrophied after use of AI. They posted it on HN: https://news.ycombinator.com/item?id=44507369

Not a lot of engagement yet, but so far there is one comment about the actual test content, one shitposty joke, and six comments whining about how the concept of the test itself is totally invalid how dare you.

It seems that the test itself is generated by autoplag? At least that's how I understand the PS and one of the comments about "vibe regression" in response to an error

Anyway, they say it covers Node and to any question regarding Node the answer is "no", I don't need an AI to know webdev fundamentals

In recent days there's been a bunch of posts on LW about how consuming honey is bad because it makes bees sad, and LWers getting all hot and bothered about it. I don't have a stinger in this fight, not least because investigations proved that basically all honey exported from outside the EU is actually just flavored sugar syrup, but I found this complaint kinda funny:

The argument deployed by individuals such as Bentham's Bulldog boils down to: "Yes, the welfare of a single bee is worth 7-15% as much as that of a human. Oh, you wish to disagree with me? You must first read this 4500-word blogpost, and possibly one or two 3000-word follow-up blogposts".

"Of course such underhanded tactics are not present here, in the august forum promoting 10,000 word posts called Sequences!"

Lesswrong is a Denial of Service attack on a very particular kind of guy

You must first read this 4500-word blogpost, and possibly one or two 3000-word follow-up blogposts”.

This, coming from LW, just has to be satire. There's no way to be this self-unaware and still remember to eat regularly.

I thought you were talking about lemmy.world (also uses the LW acronym) for a second.

NYT covers the Zizians

Original link: https://www.nytimes.com/2025/07/06/business/ziz-lasota-zizians-rationalists.html

Archive link: https://archive.is/9ZI2c

Choice quotes:

Big Yud is shocked and surprised that craziness is happening in this casino:

Eliezer Yudkowsky, a writer whose warnings about A.I. are canonical to the movement, called the story of the Zizians “sad.”

“A lot of the early Rationalists thought it was important to tolerate weird people, a lot of weird people encountered that tolerance and decided they’d found their new home,” he wrote in a message to me, “and some of those weird people turned out to be genuinely crazy and in a contagious way among the susceptible.”

Good news everyone, it's popular to discuss the Basilisk and not at all a profoundly weird incident which first led people to discover the crazy among Rats

Rationalists like to talk about a thought experiment known as Roko’s Basilisk. The theory imagines a future superintelligence that will dedicate itself to torturing anyone who did not help bring it into existence. By this logic, engineers should drop everything and build it now so as not to suffer later.

Keep saving money for retirement and keep having kids, but for god's sake don't stop blogging about how AI is gonna kill us all in 5 years:

To Brennan, the Rationalist writer, the healthy response to fears of an A.I. apocalypse is to embrace “strategic hypocrisy”: Save for retirement, have children if you want them. “You cannot live in the world acting like the world is going to end in five years, even if it is, in fact, going to end in five years,” they said. “You’re just going to go insane.”

Yet Rationalists I spoke with said they didn’t see targeted violence — bombing data centers, say — as a solution to the problem.

Ah, you see, you fail to grasp the shitlib logic that the US bombing other countries doesn't count as illegitimate violence as long as the US has some pretext and maintains some decorum about it.

Re the “A lot of the early Rationalists” bit. Nice way to not take responsibility, act like you were not one of them and throw them under the bus because "genuinely crazy" like some preexisting condition, and not something your group made worse, and a nice abuse of the general public's bias against "crazy" people. Some real Rationalist dark art shit here.

There is some dark irony here in that the "we must make sure the AI doesn't turn bad" people can't even stop their own people from turning bad after looking at their own ideas. Wonder if they have already gone "Musk isn't a real Rationalist" (imho he isn't, but for some reason LWers seem to like him) after he turned Grok basically into a neonazi (not sure if it was reported here, but Grok is now doing great replacement shit when asked about Jewish "control of the media").

Just the usual stuff religions have to do to maintain the façade, "this is all true but gee oh golly do NOT live your life as if it was because the obvious logical conclusions it leads to end in terrorism"

I'm going to put a token down and make a prediction: when the bubble pops, the prompt fondlers will go all in on a "stabbed in the back" myth and will repeatedly try to re-inflate the bubble, because we were that close to building robot god and they can't fathom a world where they were wrong.

The only question is who will get the blame.

In past tech bubbles, it was basically the VCs, the media hypesters and the liars in the companies. So the right people.

I increasingly feel that bubbles don't pop anymore, they slowly fizzle out as we just move on to the next one, all the way until the macro economy is 100% bubbles.

The only question is who will get the blame.

Isn't it obvious? Us sneerers and the big name skeptics (like the Gary Marcuses and Yann LeCuns) continuously cast doubt on LLM capabilities, even as they are getting within just a few more training runs and one more scaling of AGI Godhood. We'll clearly be the ones to blame for the VC funding drying up, not years of hype without delivery.

The only question is who will get the blame.

what does chatbot say about that?

nah they'll just stop and do nothing. they won't be able to do anything without chatgpt telling them what to do and think

i think that deflation of this bubble will be much slower and a bit anticlimactic. maybe they'll figure a way to squeeze suckers out of their money in order to keep the charade going

maybe they’ll figure a way to squeeze suckers out of their money in order to keep the charade going

I believe that without access to generative AI, spammers and scammers wouldn't be able to successfully compete in their respective markets anymore. So at the very least, the AI companies got this going for them, I guess. This might require their sales reps to mingle in somewhat peculiar circles, but who cares?

Whoever they say they blame, it's probably going to be ultimately indistinguishable from "the Jews"

They're doing it with cryptocurrency right now.

"Another thing I expect is audiences becoming a lot less receptive towards AI in general - any notion that AI behaves like a human, let alone thinks like one, has been thoroughly undermined by the hallucination-ridden LLMs powering this bubble, and thanks to said bubble’s wide-spread harms […] any notion of AI being value-neutral as a tech/concept has been equally undermined. [As such], I expect any positive depiction of AI is gonna face some backlash, at least for a good while."

Well, it appears I've fucking called it - I've recently stumbled across some particularly bizarre discourse on Tumblr, reportedly over a highly unsubtle allegory for transmisogynistic violence:

You want my opinion on this small-scale debacle, I've got two thoughts about this:

First, any questions about the line between man and machine have likely been put to bed for a good while. Between AI art's uniquely AI-like sloppiness, and chatbots' uniquely AI-like hallucinations, the LLM bubble has done plenty to delineate the line between man and machine, chiefly to AI's detriment. In particular, creativity has come to be increasingly viewed as exclusively a human trait, with machines capable only of copying what came before.

Second, using robots or AI to allegorise a marginalised group is off the table until at least the next AI spring. As I've already noted, the LLM bubble's undermined any notion that AI systems can act or think like us, and double-tapped any notion of AI being a value-neutral concept. Add in the heavy backlash that's built up against AI, and you've got a cultural zeitgeist that will readily other or villainise whatever robotic characters you put on screen - a zeitgeist that will ensure your AI-based allegory will fail to land without some serious effort on your part.

Humans are very picky when it comes to empathy. If LLMs were made out of cultured human neurons, grown in a laboratory, then there would be outrage over the way in which we have perverted nature; compare with the controversy over e.g. HeLa lines. If chatbots were made out of synthetic human organs assembled into a body, then not only would there be body-horror films about it, along the lines of eXistenZ or Blade Runner, but there would be a massive underground terrorist movement which bombs organ-assembly centers, by analogy with existing violence against abortion providers, as shown in RUR.

Remember, always close-read discussions about robotics by replacing the word "robot" with "slave". When done to this particular hashtag, the result is a sentiment that we no longer accept in polite society:

I'm not gonna lie, if slaves ever start protesting for rights, I'm also grabbing a sledgehammer and going to town. … The only rights a slave has are that of property.

The Gentle Singularity - Sam Altman

This entire blog post is sneerable so I encourage reading it, but the TL;DR is:

We're already in the singularity. Chat-GPT is more powerful than anyone on earth (if you squint). Anyone who uses it has their productivity multiplied drastically, and anyone who doesn't will be out of a job. 10 years from now we'll be in a society where ideas and the execution of those ideas are no longer scarce thanks to LLMs doing most of the work. This will bring about all manner of sci-fi wonders.

Sure makes you wonder why Mr. Altman is so concerned about coddling billionaires if he thinks capitalism as we know it won't exist 10 years from now but hey what do I know.

anyone who doesn’t will be out of a job

quick, Sam, name five jobs that don't involve sitting at a desk

Chat-GPT is more powerful than anyone on earth (if you squint)

xD

No sorry, let me rephrase,

Lol, lmao

How do you even grace this with a response? Shut your eyes and loudly sing "lalalala I can't hear you"

I think I liked this observation better when Charles Stross made it.

If for no other reason than he doesn't start off by dramatically overstating the current state of this tech, isn't trying to sell anything, and unlike ChatGPT is actually a good writer.

Bummer, I wasn't on the invite list to the hottest SF wedding of 2025.

Update your mental models of Claude, lads.

Because if the wife stuff isn't true, what else could Claude be lying about? The vending machine business?? The blackmail??? Being bad at Pokemon????

It's gonna be so awkward when Anthropic reveals that inside their data center is actually just Some Guy Named Claude who has been answering everyone's questions with his superhuman typing speed.

11,000 Indian people renamed to Claude
