*pig turned into a frog

chat is this kafkaesque

This paragraph caught my interest. It used some terms I wasn’t familiar with, so I dove in.

Ego gratification as a de facto supergoal (if I may be permitted to describe the flaw in CFAImorphic terms)

TL note: “CFAI” is this “book-length document” titled “Creating Friendly AI 1.0: The Analysis and Design of Benevolent Goal Architectures”, in case you forgot. It’s a little difficult to quickly distill what a supergoal is, despite it being defined in the appendix. It’s one of two things:

- A big picture type of goal that might require making “smaller” goals to achieve. In the literature this is also known as a “parent goal” (vs. a “child goal”)

- An “intrinsically desirable” world (end) state, which probably requires reaching other “world states” to bring about. (The other “world states” are known as “subgoals”, which are in turn “child goals”)

Yes, these two things look pretty much the same. I’d say the second definition is different because it implies some kind of high-minded “desirability”. It’s hard to quickly figure out if Yud actually ever uses the second definition instead of the first because that would require me reading more of the paper.

is a normal emotion, leaves a normal subjective trace, and is fairly easy to learn to identify throughout the mind if you can manage to deliberately "catch" yourself doing it even once.

So Yud isn’t using “supergoal” on the scale of a world state here. Why bother with the cruft of this redundant terminology? Perhaps the rest of the paragraph will tell us.

Anyway this first sentence is basically the whole email. “My brain was able to delete ego gratification as a supergoal”.

Once you have the basic ability to notice the emotion,

Ah, are we weaponising CBT? (cognitive behavioral therapy, not cock-and-ball torture)

you confront the emotion directly whenever you notice it in action, and you go through your behavior routines to check if there are any cases where altruism is behaving as a de facto child goal of ego gratification; i.e., avoidance of altruistic behavior where it would conflict with ego gratification, or a bias towards a particular form of altruistic behavior that results in ego gratification.

Yup we are weaponising CBT.

All that being said, here’s what I think. We know that Yud believes that “aligning AI” is the most altruistic thing in the world. Earlier I said that “ego gratification” isn’t something on the “world state” scale, but for Yud, it is. See, his brain is big enough to change the world, so an impure motive like ego gratification is a “supergoal” in his brain. But at the same time, his certainty in AI-doomsaying is rooted in a belief in his own super-intelligence. I’d say that the ethos of ego gratification has far transcended what can be considered normal.

Author works on ML for DeepMind but doesn’t seem to be an out and out promptfondler.

Quote from this post:

I found myself in a prolonged discussion with Mark Bishop, who was quite pessimistic about the capabilities of large language models. Drawing on his expertise in theory of mind, he adamantly claimed that LLMs do not understand anything – at least not according to a proper interpretation of the word “understand”. While Mark has clearly spent much more time thinking about this issue than I have, I found his remarks overly dismissive, and we did not see eye-to-eye.

Based on this I'd say the author is LLM-pilled at least.

However, a fruitful outcome of our discussion was his suggestion that I read John Searle’s original Chinese Room argument paper. Though I was familiar with the argument from its prominence in scientific and philosophical circles, I had never read the paper myself. I’m glad to have now done so, and I can report that it has profoundly influenced my thinking – but the details of that will be for another debate or blog post.

Best case scenario is that the author comes around to the stochastic parrot model of LLMs.

E: also from that post, rearranged slightly for readability here. (the [...]* parts are swapped in the original)

My debate panel this year was a fiery one, a stark contrast to the tame one I had in 2023. I was joined by Jane Teller and Yanis Varoufakis to discuss the role of technology in autonomy and privacy. [[I was] the lone voice from a large tech company.]* I was interrupted by Yanis in my opening remarks, with claps from the audience raining down to reinforce his dissenting message. It was a largely tech-fearful gathering, with the other panelists and audience members concerned about the data harvesting performed by Big Tech and their ability to influence our decision-making. [...]* I was perpetually in defense mode and received none of the applause that the others did.

So the author is also tech-brained and not "tech-fearful".

Ok so in Arabic it’s exactly how this guy wants it

reminded me of this

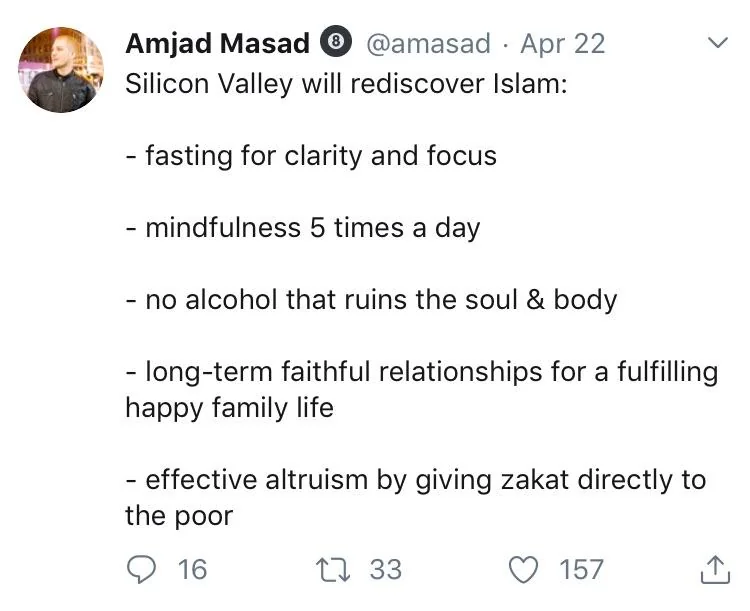

text of image/tweet

@amasad. Apr 22

Silicon Valley will rediscover Islam:

- fasting for clarity and focus

- mindfulness 5 times a day

- no alcohol that ruins the soul & body

- long-term faithful relationships for a fulfilling happy family life

- effective altruism by giving zakat directly to the poor

Yeah exactly. Loving the dude's mental gymnastics to avoid the simplest answer and instead spin it into moralising about promptfondling more good

would I be happier if I abandoned my scruples? I hope neither I nor anybody I know finds out.

I think it's accurate that the US and England didn't join to stop the Holocaust. Sentence 3 is a little oversimplified, and sentence 4 is straight-up lunacy.

all of his works are socrappy dialogues (crappy socratic dialogues). This one is an unsubtle exploration of his BDSM fetish

in the slatestar club. straight up 'sleight of mouthing it'. and by 'it', haha, well. let's just say. My peanits.

This repetitive, tautological, meaningless, tautologous, redundant tautology really gets my goat!

swlabr


Wow, that highlighting really emphasises the insidious, nefarious behaviour. This is only a hop, skip, and jump away from, what was it again? Rhomboid? Rheumatoid bactothefuture?