As a Linux user of almost 30 years, compiling hundreds of kernels over the years has given me a great appreciation of pre-built kernels, and a profound gratitude for those who package them up into convenient distros that work out of the box and let me get on with the rest of my life.

Well said. I originally compiled my own kernels because I thought it was something you just did to use Linux. I also compiled hundreds of them, probably. Now it's stock kernel all the way. Not worth the effort and time and headache.

I used Linux for about a decade from the mid-nineties, then took a break for a few years. When I came back, every distro kernel was precompiled, and it was glorious. There was never a day I said to myself "damn, I miss compiling a kernel".

A wee bit of knowledge and the wisdom to stop doing it.

Back when I was still using Gentoo, configuring your own kernel was a rite of passage. It was kind of fun to try to configure it as minimally as possible to cut down on the kernel compile time, and to understand all the different options and possibilities. And thanks to USE flags, you had access to all these different patch sets for the kernel, which took a lot of the pain out of trying things like experimental schedulers or filesystems.

Everybody gangsta until they set their block and filesystem drivers to module.

"Oh, did I need to rebuild the initrd too? Shhheeeeit, can I do that in a chroot from a livedisk or something?"
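For anyone who wants to catch that before rebooting, here is a rough sketch (not anyone's actual tooling) that scans a kernel `.config` and reports which boot-critical options are built as modules, meaning an initrd has to carry them. The option list passed in and the `SAMPLE` text are just illustrative assumptions; which options matter depends on your own disk and root filesystem.

```python
import re

# Matches lines such as CONFIG_EXT4_FS=y or CONFIG_BLK_DEV_NVME=m.
# Lines like "# CONFIG_BTRFS_FS is not set" are comments and do not match.
_OPTION = re.compile(r"^(CONFIG_[A-Za-z0-9_]+)=(.*)$")


def parse_kernel_config(text):
    """Return {option: value} for every option that is set in a .config."""
    options = {}
    for line in text.splitlines():
        match = _OPTION.match(line.strip())
        if match:
            options[match.group(1)] = match.group(2)
    return options


def modular_boot_options(options, boot_critical):
    """List the boot-critical options that are built as modules (=m)."""
    return [name for name in boot_critical if options.get(name) == "m"]


# Illustrative sample, not a real machine's config.
SAMPLE = """\
CONFIG_BLK_DEV_NVME=m
CONFIG_EXT4_FS=y
# CONFIG_BTRFS_FS is not set
"""

if __name__ == "__main__":
    opts = parse_kernel_config(SAMPLE)
    wanted = ["CONFIG_BLK_DEV_NVME", "CONFIG_EXT4_FS"]
    print(modular_boot_options(opts, wanted))
```

If the list comes back non-empty, those drivers live outside the kernel image, so the root filesystem cannot be mounted without an initrd that includes them.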

Bragging rights.

pain

Better lzma performance with xz. 🤪

Years ago (2006-ish), I ran Gentoo on a 300 MHz ultra-low-power system I used as an IRC and web server. I gained LOTS of speed and lowered power draw even further, while also enabling the hardware acceleration the board had for SSL encryption and video encoding. The whole thing would pull <5 watts and be super stable. It was well worth it.

But nowadays a Pi Zero would trounce it in both low power draw and speed with stock kernels, and I don’t really care enough to try to squeeze more out.

Customising the kernel just means something works properly in rare hardware configurations like the one you described. It's something that people on common hardware (like an x86 desktop) can't easily see or understand, because the 'stock' kernel is already working properly for them.

I do it because I can... I read release notes on every update and once you've configured a kernel for a particular machine you really don't need to touch the config, barring major changes like when PATA and SATA merged. Or of course if I'm adding a new piece of hardware.

I remove everything I don't need and compiling the kernel only takes a couple minutes. I use Gentoo and approach everything on my system the same way - remove the things I don't need to make it as minimal as possible.

Compiling your own kernel also makes it easier when you need to do a git bisect to determine when a bug was introduced to report it or try to fix it. I've also included kernel patches in my build years ago, but haven't needed to do that in a long time.
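In case the bisect idea is unfamiliar, here is a simplified sketch of the search it performs. It assumes a linear list of commits where the first is known good and the last is known bad; real `git bisect` handles branching history, and `is_bad` here is a hypothetical stand-in for "build this kernel, boot it, and check for the bug".

```python
def first_bad_commit(commits, is_bad):
    """Binary-search an ordered commit list for the first bad commit.

    Assumes commits[0] is good, commits[-1] is bad, and that there is a
    single good-to-bad transition somewhere in between.
    """
    lo, hi = 0, len(commits) - 1  # lo is known good, hi is known bad
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if is_bad(commits[mid]):
            hi = mid  # bug is already present here, look earlier
        else:
            lo = mid  # still good here, look later
    return commits[hi]


if __name__ == "__main__":
    # Hypothetical history: 16 commits, bug introduced in "c11".
    history = [f"c{i}" for i in range(16)]
    tests_run = []

    def is_bad(commit):
        tests_run.append(commit)
        return int(commit[1:]) >= 11

    print(first_bad_commit(history, is_bad), "after", len(tests_run), "builds")
```

The point is the build count: 16 commits need only about four test builds, which is why a kernel that compiles in a couple of minutes makes bisecting bearable.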

I used to compile a custom kernel for my phone to enable modules/drivers that weren't included by default by the maintainer.

It's not about performance for me, it's about control.

I run linux-xanmod-anbox for root support in Waydroid (Android on Linux).

And I configured my kernel to support VFIO (Virtual Function Input Output).

So I can fully pass through one of my GPUs to my Ameliorated Windows KVM,

which I use for both work and gaming.

How's the perf in the VM?

Amazing, basically native speeds,

currently playing Horizon Forbidden West with maxed out graphics and DRS disabled at a steady 60-80 FPS.

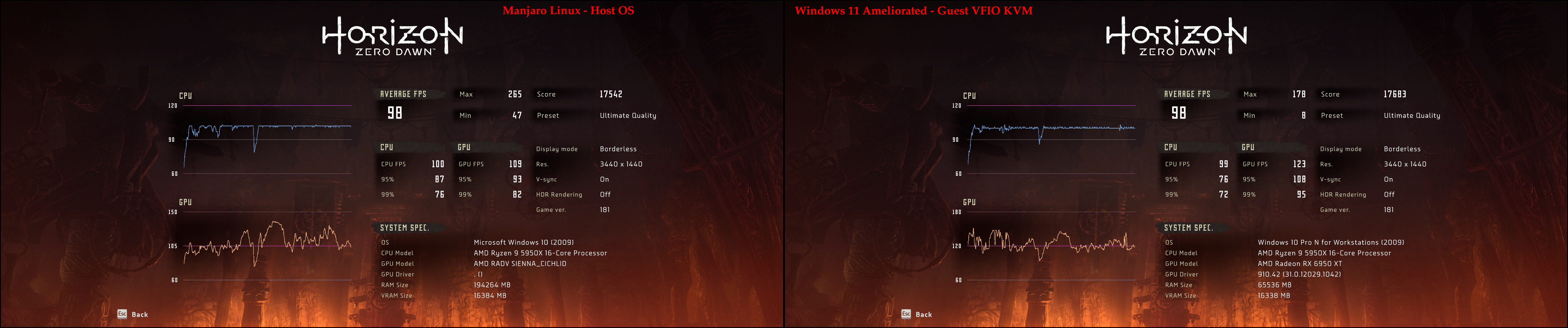

Previously I also played Horizon Zero Dawn in it, also maxed out graphics, steady locked 100 FPS,

below is a benchmark comparison of HZD in the Linux host OS and the Windows KVM guest OS:

Amazing. Does Photoshop work ?

Yush, it does under the KVM :)

Is there an easy way to run this for Photoshop? GUI if possible

Has this gotten any easier to do? I set it up a few years ago, it was painful to do and maintain so I let it slide. You were writing all sorts of scripts to specify the passthrough devices and then they'd stop working so you had to track down what was failing and update. Then there was iommu so you had to be careful which groups you added devices to.
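On the IOMMU group part: a small sketch like the one below can at least show which devices share a group before you decide what to pass through. It assumes the usual sysfs layout under `/sys/kernel/iommu_groups`, and it only lists the groups; it does not bind anything to VFIO.

```python
from pathlib import Path


def iommu_groups(root="/sys/kernel/iommu_groups"):
    """Map each IOMMU group number to the sorted device addresses in it."""
    groups = {}
    base = Path(root)
    if not base.is_dir():
        return groups  # IOMMU disabled, unsupported, or not Linux
    for group_dir in base.iterdir():
        devices_dir = group_dir / "devices"
        if group_dir.name.isdigit() and devices_dir.is_dir():
            groups[int(group_dir.name)] = sorted(
                entry.name for entry in devices_dir.iterdir()
            )
    return groups


if __name__ == "__main__":
    for number, devices in sorted(iommu_groups().items()):
        print(f"group {number}: {', '.join(devices)}")
```

A GPU that sits in a group with unrelated devices is the usual source of trouble, since the devices in a group generally have to be handed to the guest together.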

Gotta admit, it was very hard to set up initially.

However it's been working perfectly ever since I did.

Been using it for about a year or 2 now.

Also when I linked the Arch wiki,

I noticed in its article that there's now a gpu-passthrough-manager,

which will likely make the process of setting up a little bit easier.

Well, I can still boot my system without an initram (although that isn't just due to the kernel config)—does that count?

Other than that, custom kernels free up a small amount of disk space that would otherwise be taken up by modules for driving things like CANbus, and taught me a whole lot about the existence of hardware and protocols that I will never use.

Mostly just understanding what was there, what was necessary for my machine at the time and what was optional.

I have configured custom Android kernel builds to enable more USB drivers, enable module support, and tweak various other things. For one tangible example of the result: I could plug in a USB Wi-Fi adapter and use it to simultaneously connect to another Wi-Fi network with the internal NIC while also sharing my own AP over USB. On an Android device of all things. I have also adjusted kernel builds for SBCs (like Pi clones) to get things working at all.

I have never seen any reason to configure a custom kernel for my own desktop/laptop systems. Default builds for the distros I've used have been fine for me; if I'm ever dissatisfied with anything it's the version number rather than the defconfig. The RHEL/Rocky kernel omits a few features I want (like btrfs) but I'd rather stick to other distros on personal systems than tweak a distro that isn't even meant for tweaking.

The secureblue image I use disables numerous kernel modules and enables many kernel mitigation arguments.

The performance impact is minimal, so hopefully that means a more secure system? I honestly don't know, nor do I change the defaults recommended by the developer.

I'm playing around with coreboot, and that gives me the ability to embed a Linux kernel. The problem is we're limited by the size of the ROM chip, which is between 4 MiB and 16 MiB depending on the specific device. The one I'm working on has 12 MiB; about 3 is taken in order to boot normally, leaving me with 9 to play around with.

Enter Buildroot, (arguably) a Linux distro that lets you build a kernel, BusyBox, a minimal libc, and whatever other software you choose.

While it's easy to include only what's needed to have a working system (BusyBox provides a working shell as well as the coreutils), you still need to get rid of stuff you don't need, such as drivers for hardware you don't have.

Aside from that, you end up with a better-running kernel in general if you know what you're doing. I run Gentoo and have kept a working config that I tweak from time to time (especially on version upgrades).
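To make the ROM budget concrete, here is a tiny sketch of the arithmetic using the numbers from this comment (12 MiB chip, about 3 MiB reserved for booting). The image size in the example is a made-up figure, not a measurement of any real build.

```python
MIB = 1024 * 1024


def rom_headroom(payload_bytes, rom_mib=12, reserved_mib=3):
    """Bytes left in the ROM once the boot firmware and payload are in.

    A negative result means the payload does not fit.
    """
    budget = (rom_mib - reserved_mib) * MIB
    return budget - payload_bytes


if __name__ == "__main__":
    image_size = 7 * MIB + 512 * 1024  # hypothetical kernel + initramfs
    left = rom_headroom(image_size)
    if left >= 0:
        print(f"{left / MIB:.2f} MiB left")
    else:
        print(f"over budget by {-left / MIB:.2f} MiB")
```

With a budget this tight, every driver built in for hardware that isn't on the board comes straight out of those 9 MiB.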

I stopped doing it when Linux got support for kernel modules around Linux 1.2. It was a real game-changer.

I have multiple PCs. One is running a kernel that is mostly monolithic. That means it has only one module (a third party driver).

This made a lot of things easier and faster. For example, it doesn't need to load an initial RAM disk (initrd) at boot, because it already has all it needs built in and can just mount the root FS and start init. Also, all crypto modules are already present when I need them.

The drawback is that I can't unload a module and then load it again with different parameters. If I had to change a module param, I would have to change it in the bootloader config and restart (or kexec).
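For reference, parameters for built-in drivers go on the kernel command line in `module.param=value` form. The sketch below formats such arguments and reads back current values from `/sys/module/<name>/parameters`; the module and parameter names in the example are hypothetical placeholders.

```python
from pathlib import Path


def cmdline_params(module, params):
    """Format parameters as kernel command-line args: module.param=value."""
    return " ".join(f"{module}.{key}={value}" for key, value in params.items())


def read_module_params(module, root="/sys/module"):
    """Return the readable parameters the kernel currently exposes."""
    values = {}
    params_dir = Path(root) / module / "parameters"
    if not params_dir.is_dir():
        return values  # module absent or it exposes no parameters
    for entry in params_dir.iterdir():
        try:
            values[entry.name] = entry.read_text().strip()
        except OSError:
            continue  # some parameters are not readable by normal users
    return values


if __name__ == "__main__":
    # "examplemod", "debug" and "mode" are made-up names for illustration.
    print(cmdline_params("examplemod", {"debug": 1, "mode": "fast"}))
```

The resulting string is what would be appended to the kernel line in the bootloader config before the restart or kexec.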

I haven't custom compiled a kernel in ages - does anyone still do this?

It used to be sorta-kinda-necessary back when memory sizes were measured in MB instead of GB. Kernels had to be under a certain size, the module system was a bit slow, memory was at a premium, hardware support was very spotty, etc. I remember applying some guy's patches to a 2.2 kernel to get full-duplex sound on the crappy sound card my Pentium 120 had (Linux has always had garbage audio support).

I think the last time I purposefully created a custom kernel was to enable some experimental scheduler code I hoped would give a performance boost. Was many years ago though.

These days you only do it if you want to learn the process or performance test a system. Or if you're running something like Gentoo - but even then you likely just use the 'default' configuration provided by Gentoo.

Not for myself, but for a client who was running a game server. He wanted to tweak the number of ticks per second at which the kernel interacts with the CPU. I didn't even know that this was a parameter. After a few attempts it made a huge difference, according to him (I never went on that server myself), and he claimed to have grabbed a good part of the market because of it.

After that I familiarized myself more with the stuff in there. But that was a good while ago, before most of you guys were born.
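If the setting in question was the timer frequency (`CONFIG_HZ`, which is my assumption; the comment doesn't name it), the effect is easy to put in numbers. The sketch below just converts the common choices into the length of one tick.

```python
def tick_period_ms(hz):
    """Length of one timer tick in milliseconds for a given tick rate."""
    return 1000.0 / hz


if __name__ == "__main__":
    # 100, 250, 300 and 1000 are the usual CONFIG_HZ choices.
    for hz in (100, 250, 300, 1000):
        print(f"CONFIG_HZ={hz}: one tick every {tick_period_ms(hz):.2f} ms")
```

Going from 100 to 1000 ticks per second shrinks the tick from 10 ms to 1 ms, which is the kind of granularity a latency-sensitive game server might plausibly care about.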

Just download the devel kernel from your distro and go into make menuconfig. I am on an Intel Laptop with recent hardware. No reason to use amd, nvidia etc drivers. And there is a shitload of likely unmaintained drivers for ancient hardware.

Knowledge, and time forced away from the computer.

The first time I configured the kernel was in Gentoo. The gain from the configuration itself may not have been much, but making my own initramfs image to bundle and load with the kernel taught me a lot about how Linux works in early boot.

I used to manually compile with the Linux-VServer patches, before Debian started shipping a pre-patched kernel.

Linux-VServer was kinda like LXC or OpenVZ. I was using it around 2008 or so as LXC wasn't quite ready for use in production yet (was still far from finished) and OpenVZ didn't support Debian hosts.

I just installed LFS once, which inevitably came with compiling the kernel. Many times, over and over, every time with a different config, as some packages required particular options. For a dual-core Dell laptop from 2010 it was surprisingly fast, actually. Still not enjoyable or feasible for my normal systems.

A Gentoo install once upon a time... and learning how to configure a kernel. Also a slightly better understanding of kernel module configuration for custom or oddball hardware, and a vague idea of what to look for in hardware support if I want to dig deeper.

I suppose the most tangible benefit I get out of it is embedding a custom initramfs into the kernel and using it as an EFI stub. And I usually disable module loading and compile in everything I need, which feels cleaner. Also I make sure to tune the settings for my CPU and GPU, enable various virtualization options, and force SELinux to always remain active, among other things.

Camera drivers. The patch was submitted but hadn't been merged yet, and I didn't want to wait.
