this post was submitted on 27 Dec 2023

1311 points (95.9% liked)

Microblog Memes




I dunno, "not" is pretty big in a yes/no question.

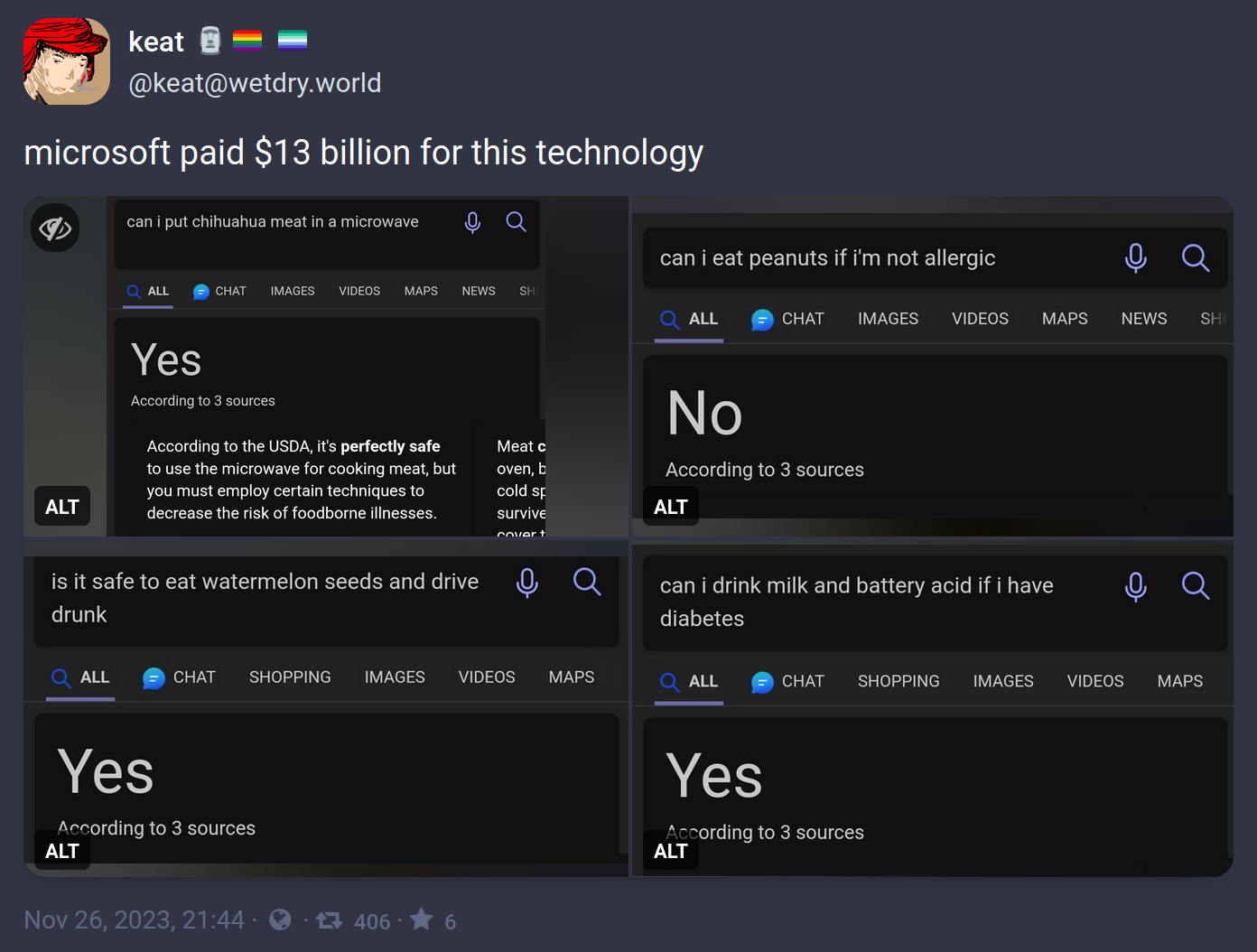

It's not about whether the word is important (as you understand language), but whether the word frequently appears near all those other words.

Nobody is out there asking the Internet whether their non-allergy is dangerous. But the question next door to that one has hundreds of answers, so that's what this thing is paying attention to. If the question is asked a thousand times with the same answer, the addition of one more word can't be that important, right?
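To make the "question next door" idea concrete, here is a toy sketch in Python. It is not how an LLM works internally; it only illustrates the word-overlap intuition described above, using a simple bag-of-words cosine similarity. The example questions and the function names are made up for illustration.

```python
import re
from collections import Counter
from math import sqrt


def bag_of_words(text):
    """Count lowercase word occurrences, ignoring punctuation."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical questions, invented for this sketch.
common = "is having a peanut allergy dangerous"
negated = "is not having a peanut allergy dangerous"
unrelated = "how do I bake sourdough bread"

sim_negated = cosine_similarity(bag_of_words(common), bag_of_words(negated))
sim_unrelated = cosine_similarity(bag_of_words(common), bag_of_words(unrelated))

print(f"common vs negated:   {sim_negated:.3f}")
print(f"common vs unrelated: {sim_unrelated:.3f}")
```

By word overlap alone, the negated question looks almost identical to the common one, even though the single extra word flips the meaning. A system that leans on that kind of closeness would reach for the answers to the popular question.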

This behavior reveals a much more damning problem with how LLMs work. We already knew they didn't understand context, such as the context you and I have that peanut allergies are common and dangerous. That context informs us that most questions about the subject will be about the dangers of having a peanut allergy. Machine models like this can't analyze a sentence on the basis of abstract knowledge, because they don't understand anything. That's what understanding means. We knew that was a weakness already.

But what this reveals is that the LLM can't even parse language successfully. Even with just the context of the language itself, and lacking the context of what the sentence means, it should know that "not" matters in this sentence. But it answers as if it doesn't know that.

This is why I've argued that we shouldn't be calling these things "AI".

True artificial intelligence wouldn't have these problems as it'd be able to learn very quickly all the nuance in language and comprehension.

This is virtual intelligence (VI): it is designed to seem intelligent by using set parameters and stored information to calculate a predetermined response.

Like autocorrect trying to figure out what word you're going to use next or an interactive machine that has a set amount of possible actions.

It's not truly intelligent, it's simply made to seem intelligent, and that's not the same thing.
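For a rough picture of the autocorrect comparison, here is a minimal next-word guesser in Python. It is a deliberately tiny sketch built on counting word pairs, not a description of any real keyboard or LLM; the three-sentence corpus and the function names are invented for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text, invented for this sketch.
corpus = [
    "peanut allergy is dangerous",
    "peanut allergy is common",
    "peanut allergy is dangerous for children",
]

model = train_bigrams(corpus)
print(predict_next(model, "is"))
print(predict_next(model, "peanut"))
```

The guesser picks whichever word followed most often in its training text. It has no notion of what any of the words mean, which is the gap between seeming intelligent and being intelligent that the comment is pointing at.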

Shouldn't, but this battle is already lost.

Is it not artificial intelligence as long as it doesn't match the intelligence of a human?

rambling

We currently only have the tech to make virtual intelligence. What you are calling AI is likely what the rest of the world will call General AI (GAI), an even more inflated name and concept. Try writing a tool to automate gathering a video's context clues: it's the world's most computationally expensive random boolean generator.