Would you rather have a dozen back and forth interactions?

these aren't the only two possibilities. i've had some interactions where i got handed one ref sheet and a sentence description and the recipient was happy with the first sketch. i've had some where i got several pieces of references from different artists alongside paragraphs of descriptions, and there were still several dozen attempts. tossing in ai art just increases the volume, not the quality, of the interaction

Besides, this is something I've heard from other artists, so it's very much a matter of opinion.

i have interacted with hundreds of artists, and i have yet to meet one that does not, to at least some degree, have a negative opinion on ai art, except those for whom image-generation models are the primary (or more commonly, only) tool for making art. so if there is such a group of artists that would be happy to be presented with ai art and asked to "make it like this", i have yet to find them

Annoying, sure, but not immoral.

annoying me is immoral actually

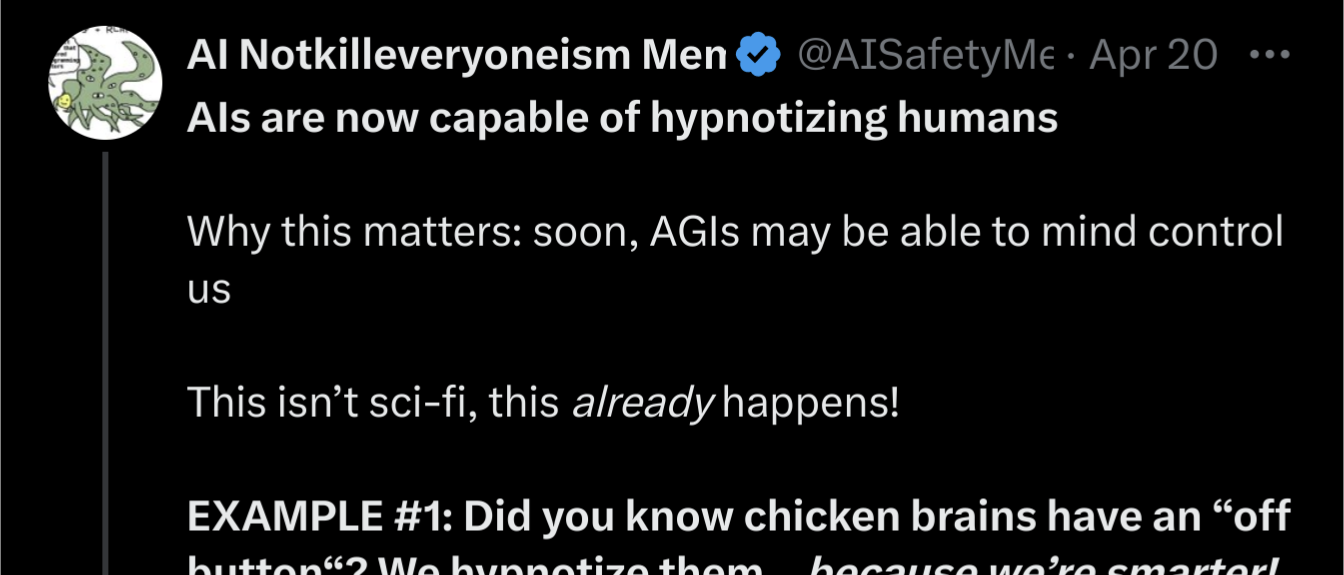

there were bits and pieces that made me feel like Jon Evans was being a tad too sympathetic to Eliezer and others whose track record really should warrant a somewhat greater degree of scepticism than he shows, but i had to tap out at this paragraph from chapter 6:

the fact that Jon praises Scott's half-baked, anecdote-riddled, Red/Blue/Gray trichotomy as "incisive" (for playing the hits to his audience), and his appraisal of the meandering transhumanist non-sequitur reading of Allen Ginsberg's Howl as "soulwrenching" really threw me for a loop.

and then the later description of that ultimately rather banal New York Times piece as "long and bad" (a hilariously hypocritical set of adjectives for a self-proclaimed fan of some of Scott's work to use), and the slamming of Elizabeth Sandifer as being an "inferior writer who misunderstands Scott's work", for uh, correctly analyzing Scott's tendencies to espouse and enable white supremacist and sexist rhetoric... yeah it pretty much tanks my ability to take what Jon is writing at face value.

i don't get how, after so many words being gentle but firm about Eliezer's (lack of) accomplishments, he puts out such a full-throated defense of Scott Alexander (and the subsequent smearing of his """enemies"""). of all people, why him?