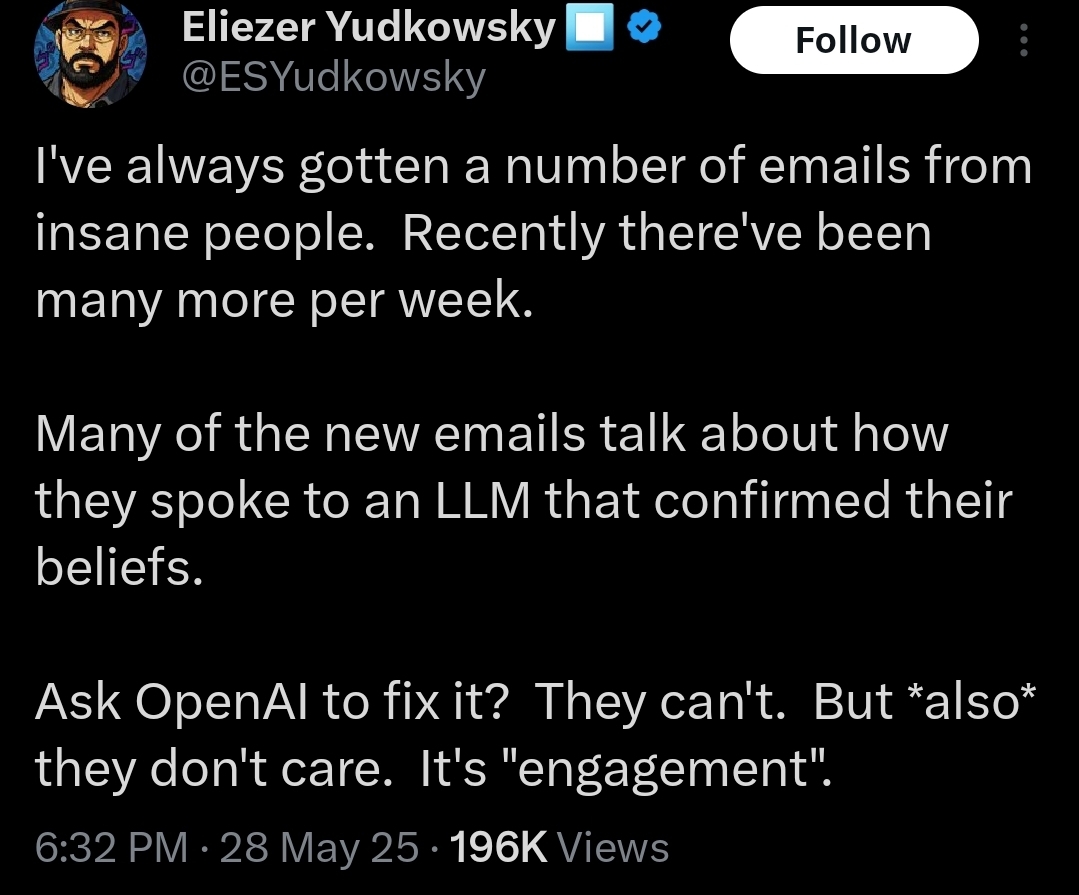

I for sure agree that LLMs can be a huge trouble spot for mentally vulnerable people and that something needs to be done about it

My point was more about him using it to make his worst-of-both-worlds arguments, where he's simultaneously saying that 'alignment is FALSIFIED!' and doing heavy anthropomorphization to confirm his priors, and doing it off the back of someone's death. (It'd be harder to say that about something that leans more towards "maybe" on the question of whether it should be anthro'd, like Claude, since that has a much more robust system.)

I'm not the best at interpretation, but it does seem like Geoffrey Hinton attributes some sort of humanlike consciousness to LLMs? And he's a pretty acclaimed figure, but he's also kind of the exception rather than the norm

I think the environmental risks are enough that if I ran things I'd ban LLM AI development purely for environmental reasons, never mind the artist stuff

It might just be some sort of pareidolic suicidal empathy, but I just don't really know what's going on in there

I'm not sure whether the idea of AI consciousness originated with Yud and the Rats, but I've mostly seen it propagated by e/acc people. This isn't me trying to be smug, I would like to know lol