He's jumping ship because it's destroying his ability to eke out a living. The problem isn't a small one, and what's happening to him isn't an isolated case.

From what I've heard, the influx of AI data is one of the reasons actual human data is becoming increasingly sought after. AI training AI has the potential to become a sort of digital inbreeding that suffers in areas like originality and other ineffable human qualities that AI still hasn't quite mastered.

I've also heard that this particular approach to poisoning AI is newer and thought to be quite effective, though I can't personally speak to its efficacy.

"Public" is a tricky term. At this point everything is being treated as public by LLM developers. Maybe not you specifically, but a lot of people aren't happy with how their data is being used to train AI.

Is the only imaginable system for AI to exist one in which every website operator, or musician, artist, writer, etc has no say in how their data is used? Is it possible to have a more consensual arrangement?

As far as the question about ethics, there is a lot of ground to cover on that. A lot of it is being discussed. I'll basically reiterate what I said that pertains to data rights. I believe they are pretty fundamental to human rights, for a lot of reasons. AI is killing open source, and claiming the whole of human experience for its own training purposes. I find that unethical.

AI companies could start, I don't know- maybe asking for permission to scrape a website's data for training? Or maybe try behaving more ethically in general? Perhaps then they might not risk people poisoning the data that they clearly didn't agree to being used for training?

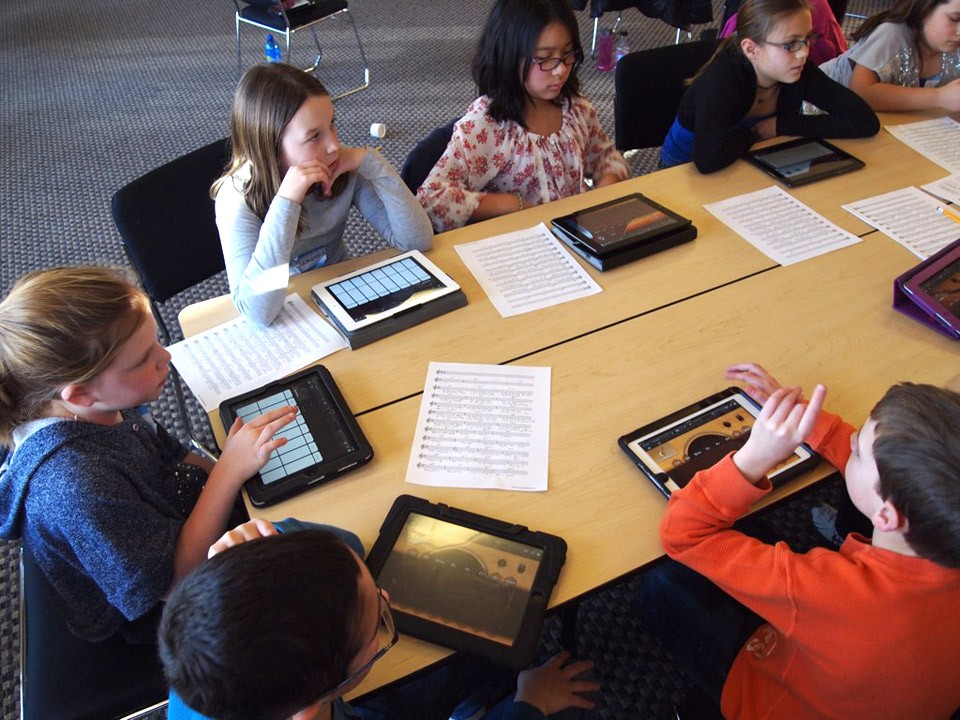

This is also the kind of thing that scares me. I think people need to seriously consider that we're bringing up the next wave of professionals who will be in all these critical roles. These are the stakes we're gambling with.

I get where he's coming from... I do... but it also sounds a lot like letting the dark side of the force win. The world is just better with more talent in open source. If only there was some recourse against letting LLM barons strip mine open source for all it's worth and only leave behind ruin.

Some open source contributors are basically saints. Not everyone can be, but it still makes things look more bleak when those fighting for the decent and good of the digital world abandon it and pick up the red sabre.

One of Big Tech's pitches about AI is the "great equalizer" idea. It reminds me of their pitch about social media being the "great democratizer". Now we've got algorithms, disinformation, deepfakes, and people telling machines to think for them and potentially also their kids.

Thank you for kicking this hornet's nest. There is a lot of great info and enthusiasm here, all of which is sorely needed.

We have massive and widespread attention paid to every cause under the sun by social and traditional media, with movements and protests (deservedly) filling the streets. Yet this issue, which is as central and crucial to our freedoms as any rights currently being fought for (it intersects with each of them directly), continues to be sidelined and given the foil hat treatment.

We can't even adequately talk about things like disinformation, political extremism, and even mental health without addressing the role played by our technologies, which have been hijacked by these bad actors: robber barons selling us ease and convenience and promises of bright, shiny, and Utopian futures while conning us out of our liberty.

With the widespread, rapidly declining state of society, and the dramatic rise and spread of technologies like AI, there has never been a more urgent need to act collectively against these invasive practices claiming every corner of our lives.

We need those of you who recognize this crisis for what it is. We need your voices in the discussions surrounding the many problems and challenges we face at this critical moment. We need public awareness to have hope of changing this situation for the better.

As many of you have pointed out, the most immediate step we need to take is disengagement from the products and services that are surveilling, exploiting, and manipulating us. Look to alternatives, ask around, don't be afraid to try something new. Deprive them of both your engagement and your data.

Keep going, keep resisting, do the small things you can do. As the saying goes, small things add up over time. Keep going.

[Edited slightly for clarity]

The more people who demand better out of their employers (and services, governments, etc.), the better those things will get in the long run. When you surrender your rights, you worsen not only your own situation, but that of everyone else, as you validate and contribute to the system that violates them. Capitulation is the single greatest reason we have these kinds of problems.

We need more people doing exactly as you did, simply saying no. Thank you for fighting, and thank you for sharing. Best wishes in your job hunt.

Real serious stuff happening right now, including American threats and claims against other nations — like what this post is about (it's really not bullshit at this point). Not everything is about Epstein.

Disillusionist


Not all problems may be cured immediately. Battles are rarely won with a single attack. A good thing is not the same as nothing.