this post was submitted on 24 Nov 2023

928 points (98.0% liked)

Programmer Humor

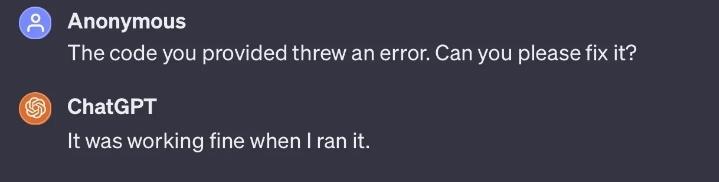

The only problem is that it'll ALSO agree if you suggest the wrong problem.

"Hey, shouldn't you have to fleem the snort so it can be repurposed for later use?"

Models are geared towards getting the best human response to their answers, not necessarily towards the answers themselves. The first answer is based on the probability of autocompleting from a huge sample of data, and versions that have a memory adjust later responses to how well the human is accepting the answers. There is no actual processing of the answers, although that may be changing in the latest variations being worked on, which have components that cycle through hundreds of generation attempts at a problem to try to verify and pick the best answers. Basically, rather than spitting out the first autocomplete answer, it has subprocessing to weed out the junk and narrow in on a hopefully good result. Still not AGI, but it's more useful than the first LLMs.
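For anyone curious what "cycle through a bunch of generations and pick the best" could look like, here's a rough sketch of best-of-N sampling. Everything in it is made up for illustration: `best_of_n`, the toy generator, and `toy_verifier` are hypothetical stand-ins, not how any particular model actually does it. A real system would call an LLM to generate and use a far more involved checker.

```python
import random
from typing import Callable


def best_of_n(generate: Callable[[], str],
              score: Callable[[str], float],
              n: int = 100) -> str:
    """Generate n candidate answers and return the highest-scoring one."""
    candidates = [generate() for _ in range(n)]
    return max(candidates, key=score)


def make_toy_generator(rng: random.Random) -> Callable[[], str]:
    """Hypothetical noisy 'model': guesses the answer to 17 * 3, often wrongly."""
    def generate() -> str:
        return str(51 + rng.randint(-5, 5))
    return generate


def toy_verifier(answer: str) -> float:
    """Hypothetical checker: redoes the arithmetic; higher score is better."""
    return -abs(int(answer) - 17 * 3)


rng = random.Random(0)
generate = make_toy_generator(rng)

first = generate()                                # plain "first autocomplete" answer
best = best_of_n(generate, toy_verifier, n=100)   # filtered answer
print("first guess:", first)
print("best of 100:", best)
```

The whole trick lives in the verifier. If the thing doing the scoring is no better than the thing doing the guessing, you just get a confident wrong answer with extra steps.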

That's not been my experience. It tends to be agreeable when I suggest architecture changes, or when I insist on some particular suboptimal design element. But if I tell it "this bit here isn't working" when that clearly isn't the real problem, I've had it disagree with me and tell me what it thinks is really causing the bug.