
this post was submitted on 09 Dec 2023


Advent Of Code


An unofficial home for the advent of code community on programming.dev! Other challenges are also welcome!



Thank you!

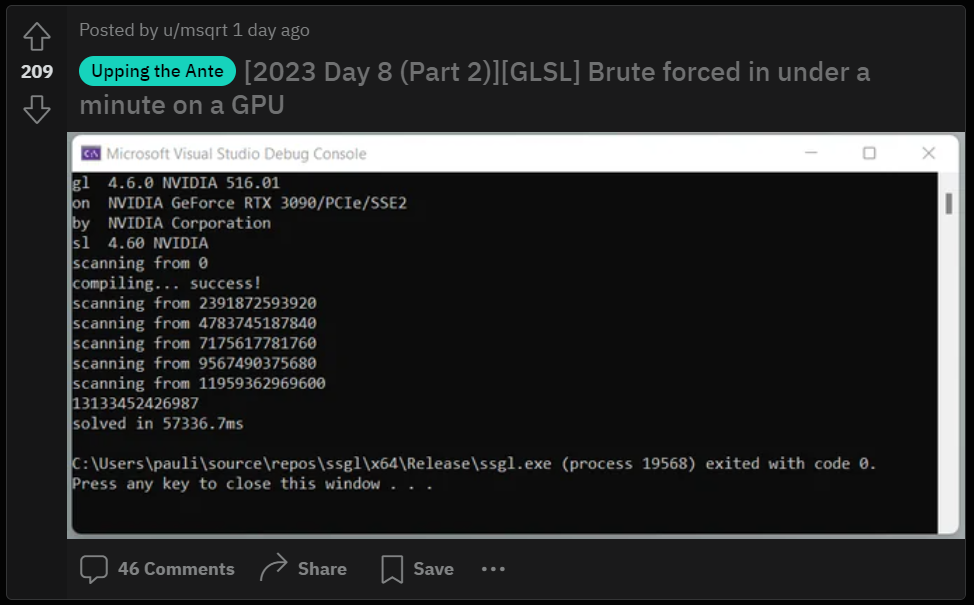

One way to get the data out is to render to a (hidden) surface/canvas. It's all just bytes to the computer, so you can dump the result data into the display buffer, then take a "screenshot" and interpret the RGBA values as data.
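Here is a minimal CPU-only sketch of the decoding step. It assumes the shader wrote each unsigned 32-bit result into one RGBA8 pixel with R as the lowest byte. It also assumes the surface applies no blending, color-space conversion, or alpha premultiplication, since any of these can alter the bytes. `encode_results_as_rgba` only stands in for the shader's output. Both function names are hypothetical, and no real graphics API is called.

```python
import struct

def encode_results_as_rgba(values):
    """Stand-in for the shader: pack each uint32 into one RGBA8 pixel.

    Little-endian packing puts the lowest byte in R and the highest in A.
    """
    return b"".join(struct.pack("<I", v) for v in values)

def decode_rgba_screenshot(pixels):
    """Interpret a tightly packed RGBA8 "screenshot" as uint32 results."""
    if len(pixels) % 4 != 0:
        raise ValueError("RGBA8 data must be a multiple of 4 bytes long")
    # iter_unpack walks the buffer 4 bytes (one pixel) at a time
    return [value for (value,) in struct.iter_unpack("<I", pixels)]

frame = encode_results_as_rgba([0, 255, 256, 123456789])
print(list(frame[8:12]))              # pixel for 256: [0, 1, 0, 0]
print(decode_rgba_screenshot(frame))  # [0, 255, 256, 123456789]
```

One RGBA8 pixel holds at most 32 bits, so larger results would have to be split across several pixels.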

Graphics Programmer here.

More likely, you would just write data to a buffer (basically an array of whatever element type you want) rather than to a render target, and then read it back to the CPU. DirectX, Vulkan, etc. all have APIs for uploading data to and downloading it from the GPU quite easily, and CUDA makes it even easier. So a simple compute shader or CUDA kernel that writes to a buffer makes the most sense for general-purpose computation like an Advent of Code problem.
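To illustrate the buffer approach without a GPU, here is a CPU-only Python simulation of the pattern. A kernel function runs once per thread index and writes one element of a typed output buffer. The "readback" just reinterprets the raw bytes as that element type. The kernel body (`x * x + 1`), `dispatch`, and the int64 element type are hypothetical choices for illustration. On a real device, the dispatch would be a compute-shader or CUDA launch, and the readback would be a device-to-host copy (e.g. `cudaMemcpy` with `cudaMemcpyDeviceToHost` in CUDA).

```python
from array import array

def kernel(tid, inp, out):
    """Hypothetical kernel: each thread handles one independent input element."""
    x = inp[tid]
    out[tid] = x * x + 1

def dispatch(kern, n, inp, out):
    """Run the kernel once per thread index; a GPU would run these in parallel."""
    for tid in range(n):
        kern(tid, inp, out)

inp = array("q", [1, 2, 3, 1_000_000])  # signed 64-bit elements
out = array("q", [0] * len(inp))
dispatch(kernel, len(inp), inp, out)

raw = out.tobytes()        # stands in for the bytes copied back from the device
readback = array("q")
readback.frombytes(raw)    # reinterpret the bytes as int64, no pixel packing
print(readback.tolist())   # [2, 5, 10, 1000000000001]
```

Because the element type is whatever you declare, a result like 1000000000001 fits in a single element. In a 32-bit RGBA8 pixel it would not.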