this post was submitted on 08 Sep 2023


linuxmemes



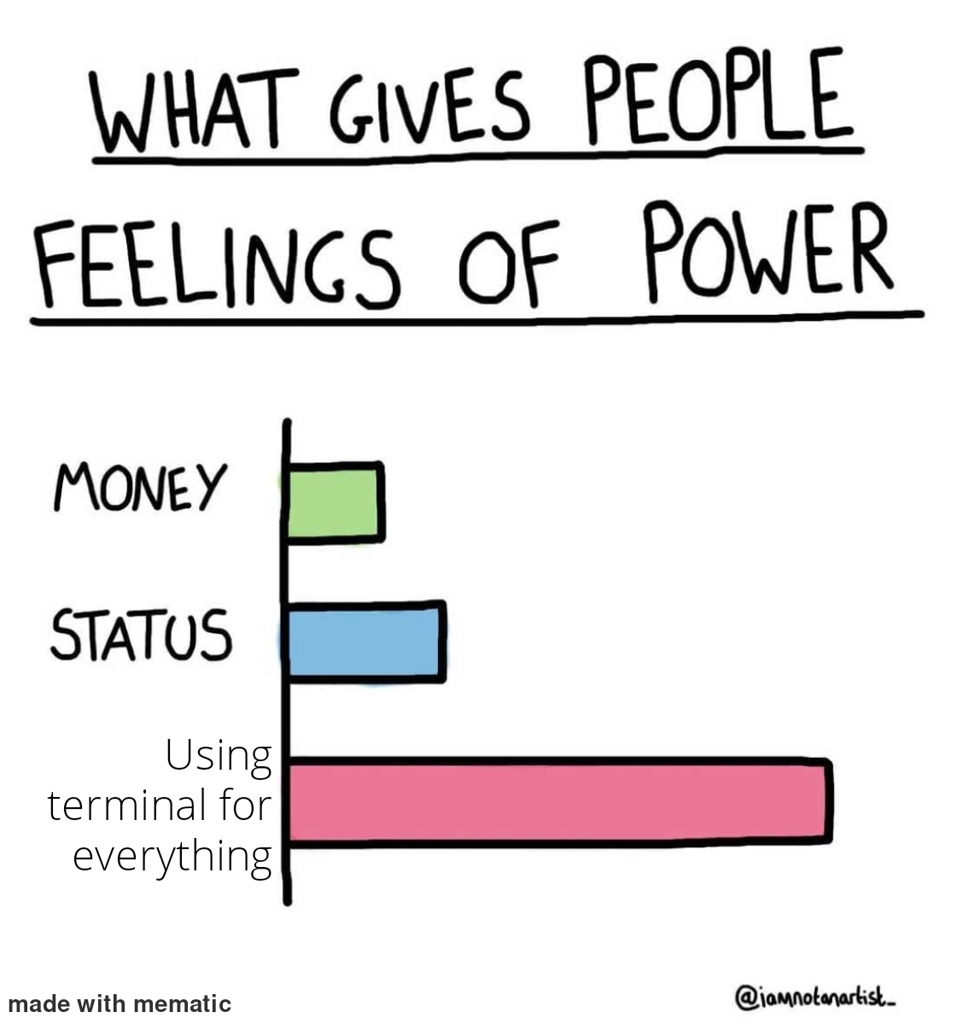

A dirty Linux admin here. Imagine you've ssh'd into the nginx log folder and all you want to know is which IPs have been beating against a certain URL over roughly the last seven days and getting a 429, most frequent first. In kiddie script it's something like `find -mtime -7 -name "*access*" -exec zgrep "$some_damed_url" {} \; | grep 429 | awk '{print $3}' | sort | uniq -c | sort -rn | less`. It depends on how your logs look (and I assume you've been rotating and compressing them, which is where the `zgrep` comes from), it should be run in `tmux`, and it could (should?) be written better 'n all. But my point is: do that for me in a GUI (I'm waiting ⏲).
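For the curious, here is a slightly more defensive sketch of the same pipeline. It assumes nginx's default `combined` log format (client IP in field 1, request path in field 7, status code in field 9) and GNU `find` and `gzip`; `top_429_ips` is just a hypothetical function name. Matching on the status field instead of a bare `grep 429` avoids false hits from byte counts or paths that happen to contain "429".

```shell
#!/usr/bin/env bash
# Sketch: count client IPs that got HTTP 429 on a given URL in the last 7 days.
# Assumes nginx's default "combined" log format:
#   $1 = client IP, $7 = request path, $9 = status code
# zcat -f (GNU gzip) reads both plain and gzipped rotated logs.
top_429_ips() {
  local url="$1" dir="${2:-.}"
  find "$dir" -type f -name '*access*' -mtime -7 -exec zcat -f {} + \
    | awk -v url="$url" '$9 == 429 && index($7, url) > 0 { print $1 }' \
    | sort | uniq -c | sort -rn   # numeric sort on the counts, highest first
}
```

Usage would be something like `top_429_ips '/some/url' /var/log/nginx | less`, run inside `tmux` as suggested above.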

As a general rule, I have most of my app and system logs sent to a central log aggregation server. Splunk, Logentries, even CloudWatch can do this now.

But you're right: if there's an archaic server whose logs you need to analyse, nothing beats `find`/`grep`/`sed`.

In Splunk this is a pretty straightforward query that can be piped to `stats count` and sorted. I don't know if you'd exactly count that as a GUI, though.