this post was submitted on 22 Aug 2023



This seems to have descended into a debate on "what is consciousness", which, as I originally said, is a question that isn't easy to answer. My point was that modern AI inherently isn't aware of what it's saying, not that it couldn't be defined as an intelligence. As far as I know, there's no solid evidence that it is aware. To finish, I would like to apologise if my initial comment came across as condescending. I didn't mean it that way.

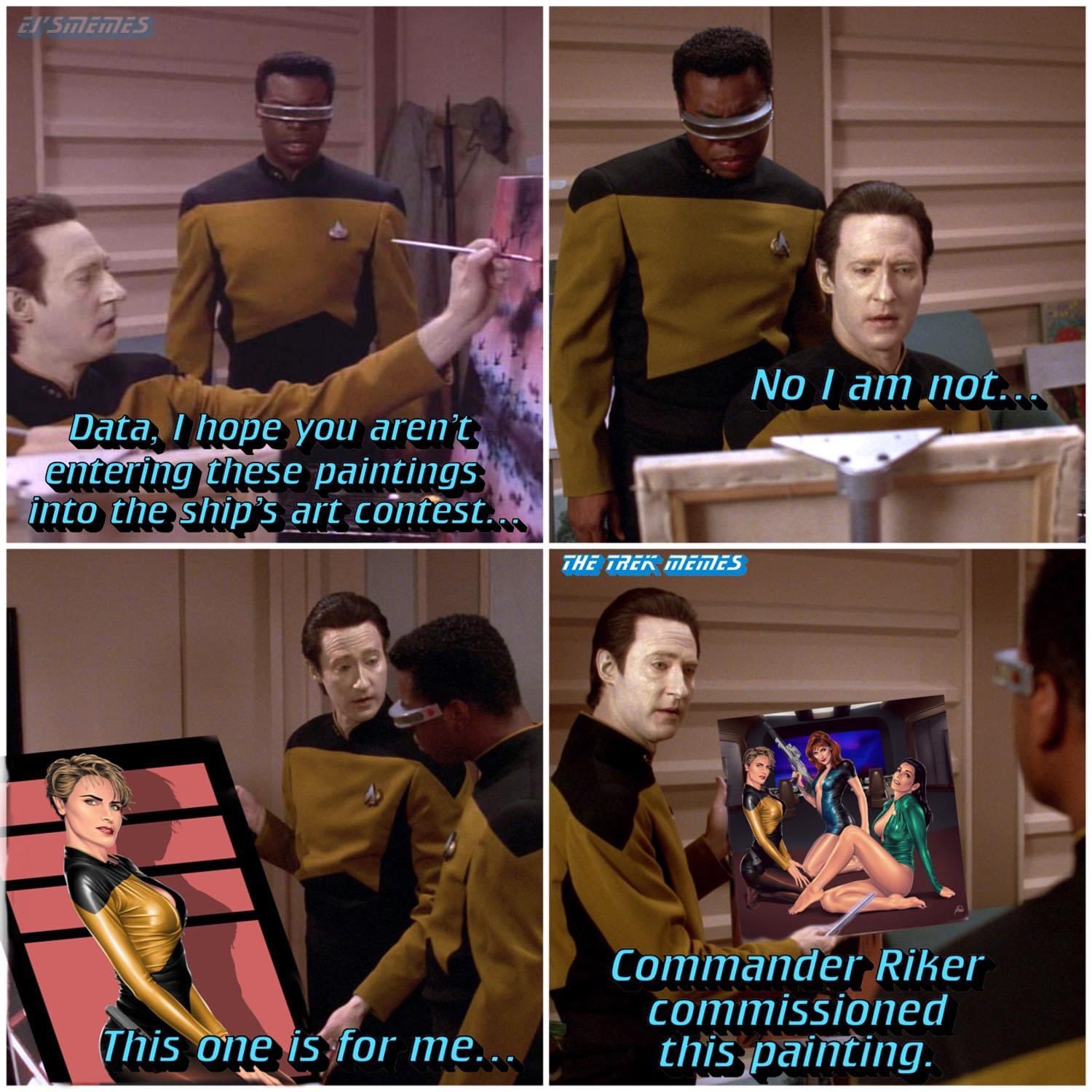

I disagree. While I did go on a tangent there analyzing ChatGPT's capabilities, my ultimate argument was that we shouldn't be discussing consciousness at all. When deciding whether Data has artificial or natural intelligence, we only need to look at the source of his intelligence: it was man-made. Therefore any painting Data produces is "AI art", because Data only has AI, despite having capabilities on par with or even exceeding those of a human.

To be honest, I did take it as being a little condescending, but it doesn't really matter. All I wish is to have a discussion, and expand our knowledge in the process.

Thank you then! It seems like our debate stemmed from different definitions. Based on your definition of what constitutes AI, Data would absolutely count. By my definition, he is too advanced to be in the same category. But I get the impression that we would both agree that he is more advanced than any modern AI system. Once again, I'm sorry for coming across as condescending; I will have to choose my words more carefully in the future!

What are attention mechanisms if not being aware of what it has said so it can inform what it is about to say? Ultimately, I think people saying these generative models aren't really "intelligent" boils down to deciding they don't like the impact these things are having, and are going to have, on our society. Characterizing them as a fancy statistical curve lets people short-circuit that much harder conversation.