this post was submitted on 28 Apr 2024


Science Memes



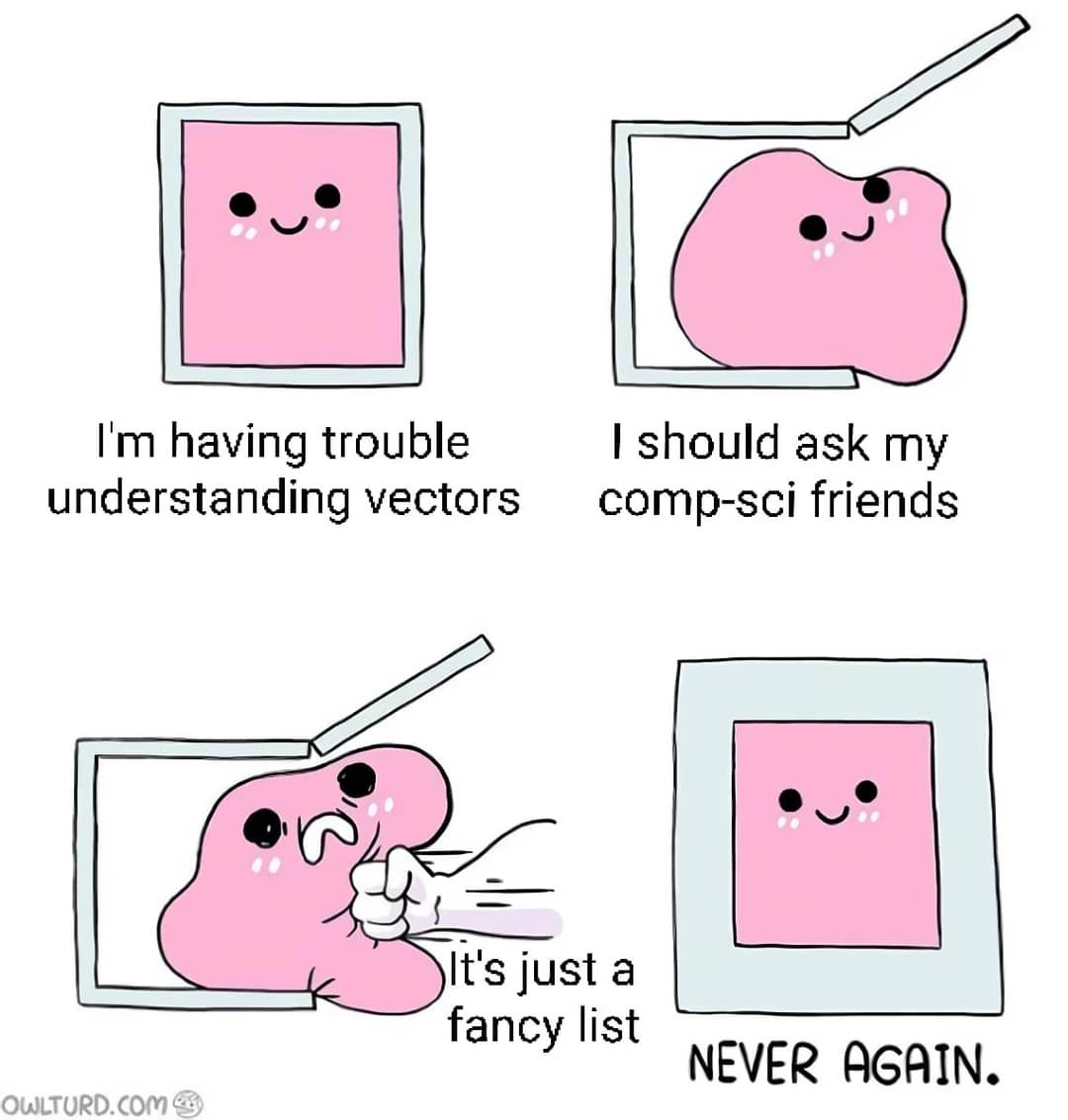

dynamically-sized: The size of it can change as needed.

list: It stores multiple things together.

object: A bit of programmer-defined data.

of the same type: All the objects in the list are defined the same way.

stored contiguously in memory: If you think of memory as a bookshelf, then all the objects in the list would be stored right next to each other on the shelf rather than spread across it.
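To make those five pieces concrete, here's a minimal sketch using Python's standard-library `array` type. Picking `array` is my assumption for illustration (the thread doesn't name a language here); it happens to tick every box: it grows on demand, holds elements of one type, and keeps them in a single buffer.

```python
from array import array

# "of the same type": typecode "d" means every element is a C double.
values = array("d")

# "dynamically-sized": the list grows as elements are appended.
for x in (1.5, 2.5, 3.5):
    values.append(x)

# "stored contiguously": one buffer, elements sitting back to back.
address, count = values.buffer_info()   # start address and element count
raw = values.tobytes()                  # the raw bytes of that buffer

print(count, "elements of", values.itemsize, "bytes each")
print("no gaps between elements:", len(raw) == count * values.itemsize)
```

Trying `values.append("text")` raises a `TypeError`, which is the "same type" rule being enforced.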

Dynamically sized but stored contiguously makes the systems performance engineer in me weep. If the lists get big, the kernel is going to do so much churn.

Contiguous storage is very fast to iterate over, though, which often offsets the cost of allocation.

Modern CPUs are also extremely efficient at dealing with contiguous data structures. Branch prediction and caching get to shine on them.

Avoiding memory accesses, or helping the CPU fetch everything up front, switches the physical domain of the computation.

Which is why you should:

(… `Vec` doubles in size every time it runs out of space)

Memory is fairly cheap. Allocation time, not so much.
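A rough sketch of why doubling pays off. `GrowableArray` and `fill` are hypothetical names made up for this example, and a plain Python list stands in for the contiguous buffer; this is a toy model for counting work, not how any real `Vec` is implemented.

```python
class GrowableArray:
    """Toy dynamic array that counts reallocations and element copies."""

    def __init__(self, grow):
        self._grow = grow        # function: old capacity -> new capacity
        self._slots = []         # stands in for the contiguous buffer
        self._capacity = 0
        self._length = 0
        self.reallocations = 0   # how many times a new buffer was needed
        self.copied = 0          # how many elements were moved in total

    def push(self, item):
        if self._length == self._capacity:
            # Out of space: get a bigger buffer and move everything over.
            new_capacity = self._grow(self._capacity)
            new_slots = [None] * new_capacity
            new_slots[:self._length] = self._slots[:self._length]
            self.copied += self._length
            self._slots = new_slots
            self._capacity = new_capacity
            self.reallocations += 1
        self._slots[self._length] = item
        self._length += 1


def fill(grow, n):
    """Push n items into a fresh array that uses the given growth rule."""
    arr = GrowableArray(grow)
    for i in range(n):
        arr.push(i)
    return arr


doubling = fill(lambda cap: max(1, cap * 2), 1024)   # double when full
one_by_one = fill(lambda cap: cap + 1, 1024)         # grow by exactly one

print(doubling.reallocations, doubling.copied)
print(one_by_one.reallocations, one_by_one.copied)
```

For 1,024 pushes, doubling needs 11 reallocations and moves 1,023 elements in total; growing one slot at a time needs 1,024 reallocations and moves 523,776. The price of doubling is that up to half the buffer can sit unused, which is the "memory is cheap" side of the trade.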

MATLAB likes to pick the smallest available spot in memory to store a list, so for loops that grow a matrix it's recommended to preallocate the space with a matrix full of zeros!
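The same preallocation idea, sketched in Python rather than MATLAB to keep one language in the thread's examples. The function names are hypothetical; the point is only the shape of the two loops.

```python
from array import array


def squares_preallocated(n):
    # Reserve all n slots up front, filled with zeros, then write in place.
    out = array("d", [0.0]) * n
    for i in range(n):
        out[i] = float(i * i)
    return out


def squares_grown(n):
    # Start empty and let the array grow inside the loop.
    out = array("d")
    for i in range(n):
        out.append(float(i * i))
    return out


print(squares_preallocated(5) == squares_grown(5))
```

Both produce the same values; the preallocated version just never has to ask for a bigger buffer while the loop is running.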

Is that churn or chum? (RN or M)

Churm